Bandwidth (BW) management in traffic-engineered (TE) networks is often discussed in terms of specific protocols or architectural models. Yet before debating architectures or protocol-specific details, it is useful to step back and understand the functional problem being solved. At its core, bandwidth management is a control loop: it observes network conditions, interprets those observations, makes decisions about resource allocation, and enforces those decisions in the control and forwarding planes. When this loop is examined in stages rather than as a single feature, its functionality becomes much clearer.

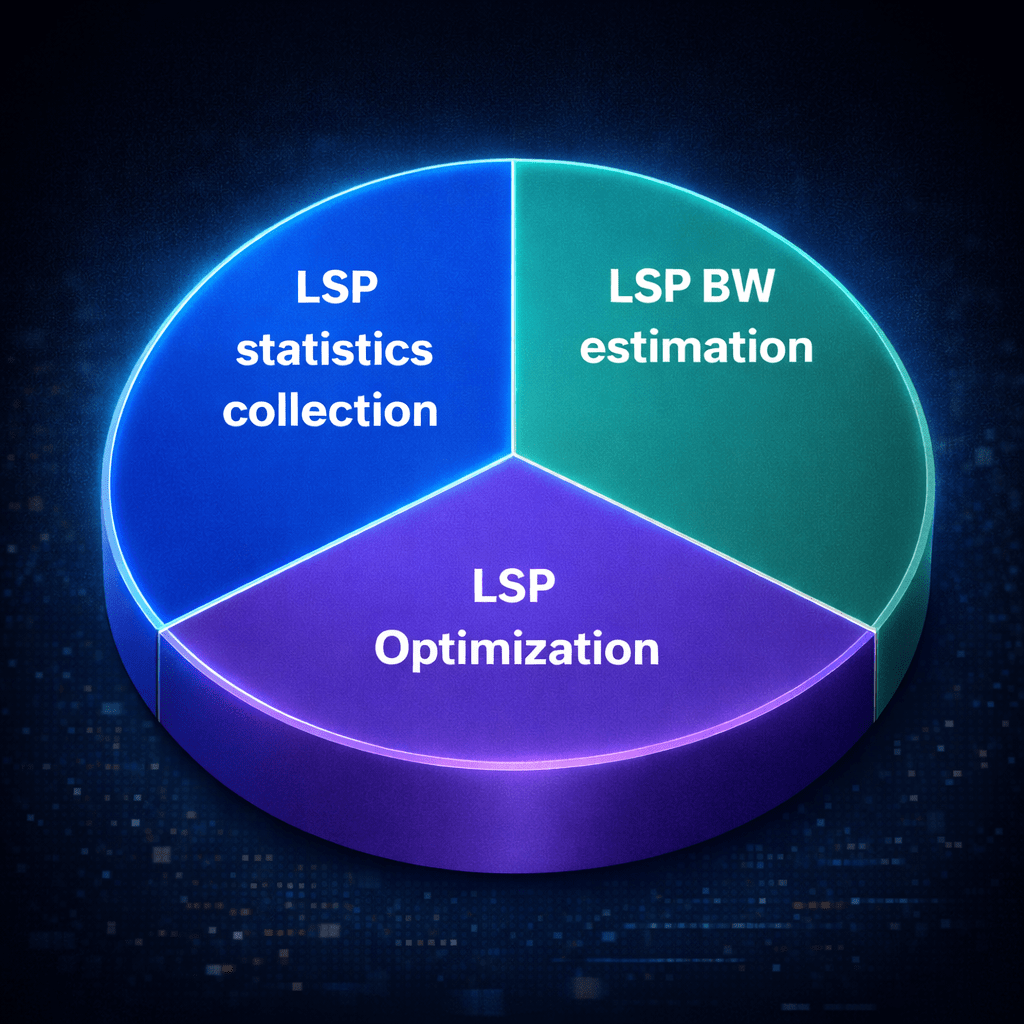

The process can be understood as three connected functions: statistics collection, bandwidth estimation and prediction, and TE tunnel optimization. Each stage answers a different question. The first asks what is happening in the network. The second asks how much BW should be allocated based on those observations. The third determines what actions must be taken to align network resources with demand. Treating these stages separately shifts the discussion from protocols to system behavior.

Statistics Collection: Observing the Network

Every BW management system begins with measurement. Routers and forwarding devices continuously generate operational data, including interface counters, per-tunnel statistics, queue occupancy information, drop metrics, and the current state of reserved and unreserved bandwidth. These measurements form the foundation of the control loop. Without accurate and timely observation, any subsequent estimation or optimization will be flawed.
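
As a minimal sketch of the most basic measurement step, assume the device exposes a cumulative 64-bit octet counter (as the IF-MIB `ifHCInOctets` object does). The rate over a polling interval is then the counter delta, converted to bits and divided by the elapsed time. The function name `rate_bps` is hypothetical.

```python
def rate_bps(prev_octets: int, curr_octets: int, interval_s: float,
             counter_bits: int = 64) -> float:
    """Average rate in bits/s between two samples of a cumulative octet counter."""
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    # Modular subtraction absorbs a single wrap (curr < prev) of a fixed-width counter.
    # A counter reset (e.g. after a reboot) looks like a wrap and would need to be
    # detected separately; this sketch does not do that.
    delta_octets = (curr_octets - prev_octets) % (2 ** counter_bits)
    return delta_octets * 8 / interval_s


if __name__ == "__main__":
    # 750,000 octets in 10 s -> 600,000 bit/s
    print(rate_bps(1_000_000, 1_750_000, 10))
    # A 64-bit counter that wrapped between samples: 500 octets in 1 s -> 4,000 bit/s
    print(rate_bps(2**64 - 100, 400, 1))
```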

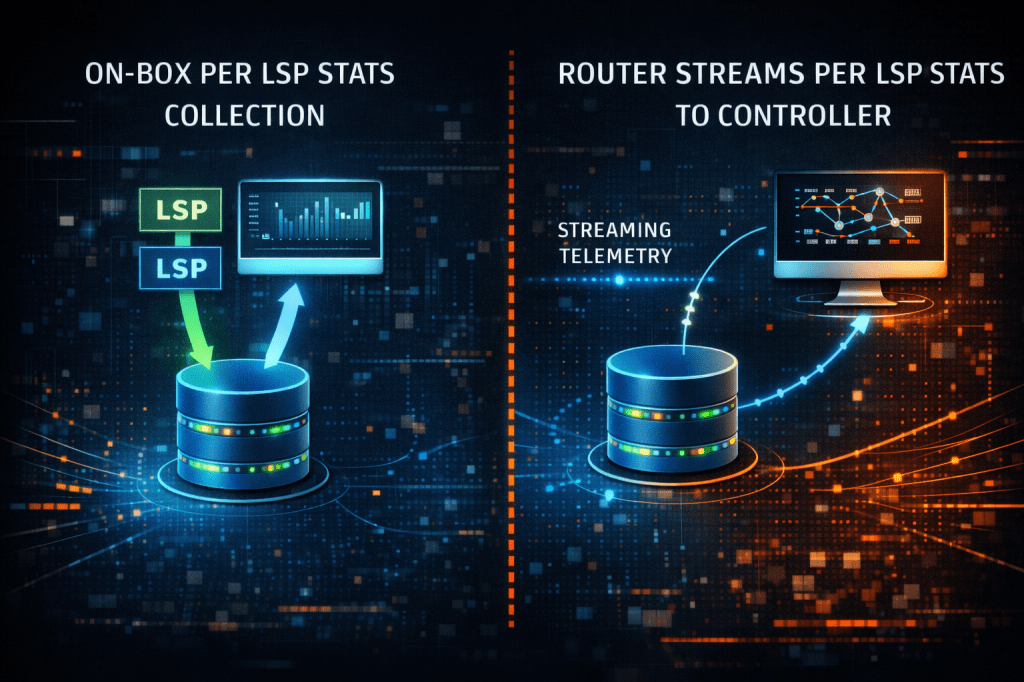

One of the primary architectural considerations in this stage is where measurement data is processed and retained. In some designs, statistics remain primarily on the device. Routers measure traffic and retain short-term history locally, enabling rapid and deterministic decisions. This approach minimizes dependency on external systems and allows bandwidth adjustments to occur even if connectivity to centralized platforms is temporarily disrupted. The tradeoff is that local devices have limited storage and limited visibility beyond their immediate perspective. While they can respond quickly to changes, they do not inherently understand broader network-wide trends.
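
A bounded buffer is one simple way to model the limited on-box retention described above. The `LocalHistory` class below is a hypothetical illustration, not any vendor's implementation. Once `capacity` samples are stored, each new sample evicts the oldest, so the device can still compute a recent peak locally but cannot see anything older.

```python
from collections import deque


class LocalHistory:
    """Hypothetical on-box store that retains only the most recent samples."""

    def __init__(self, capacity: int):
        # deque with maxlen evicts the oldest sample automatically when full
        self.samples = deque(maxlen=capacity)

    def record(self, rate_bps: float) -> None:
        self.samples.append(rate_bps)

    def recent_peak(self) -> float:
        """Peak over the retained window; older history is simply gone."""
        return max(self.samples, default=0.0)


if __name__ == "__main__":
    history = LocalHistory(capacity=3)
    for rate in (10.0, 50.0, 20.0, 30.0, 5.0):
        history.record(rate)
    print(history.recent_peak())  # 30.0: the 50.0 sample has already been evicted
```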

In other designs, devices export telemetry to external systems. Off-box collectors, controllers, or analytics platforms may aggregate data across the entire topology. This enables longer-term historical storage, cross-domain correlation, and potentially more advanced analytics. As with on-box collection, telemetry can be gathered through periodic retrieval or continuous streaming. Periodic retrieval is straightforward and widely supported, but it is constrained by polling intervals and may miss short-lived bursts. Streaming or event-driven telemetry provides higher resolution and is better suited for automation, though it introduces new considerations around data volume, transport reliability, and collector scalability.
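
The effect of polling intervals on short bursts can be shown with simple arithmetic. The sketch below uses hypothetical per-second rate samples and averages them over non-overlapping polling windows. A 5-second burst that is obvious at 1-second resolution nearly disappears in a 30-second average.

```python
def poll_averages(per_second_rates: list[float], poll_interval_s: int) -> list[float]:
    """Average 1-second rate samples over consecutive, non-overlapping polling intervals."""
    if poll_interval_s < 1:
        raise ValueError("poll_interval_s must be >= 1")
    averages = []
    for start in range(0, len(per_second_rates), poll_interval_s):
        window = per_second_rates[start:start + poll_interval_s]
        averages.append(sum(window) / len(window))
    return averages


if __name__ == "__main__":
    # Hypothetical minute of traffic: 1 Gb/s baseline with a 5-second, 9 Gb/s burst.
    rates = [1e9] * 60
    rates[10:15] = [9e9] * 5
    fine = poll_averages(rates, 1)     # per-second view (streaming-like)
    coarse = poll_averages(rates, 30)  # 30-second polling
    print(f"{max(fine) / 1e9:.2f} vs {max(coarse) / 1e9:.2f} Gb/s")  # 9.00 vs 2.33 Gb/s
```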

The key insight is that the structure of the measurement layer determines which kinds of estimation and optimization are possible. Limited historical retention and localized visibility tend to produce systems that react quickly to a changing environment. Rich historical datasets and global visibility make predictive techniques feasible. The quality and placement of telemetry set the ceiling for the rest of the control loop.

Bandwidth Prediction: Making Sense of the Data

Measurement alone does not allocate bandwidth. The time-series data must be translated into a decision about how much bandwidth should be reserved for a tunnel. This transformation is the role of the BW estimation layer.

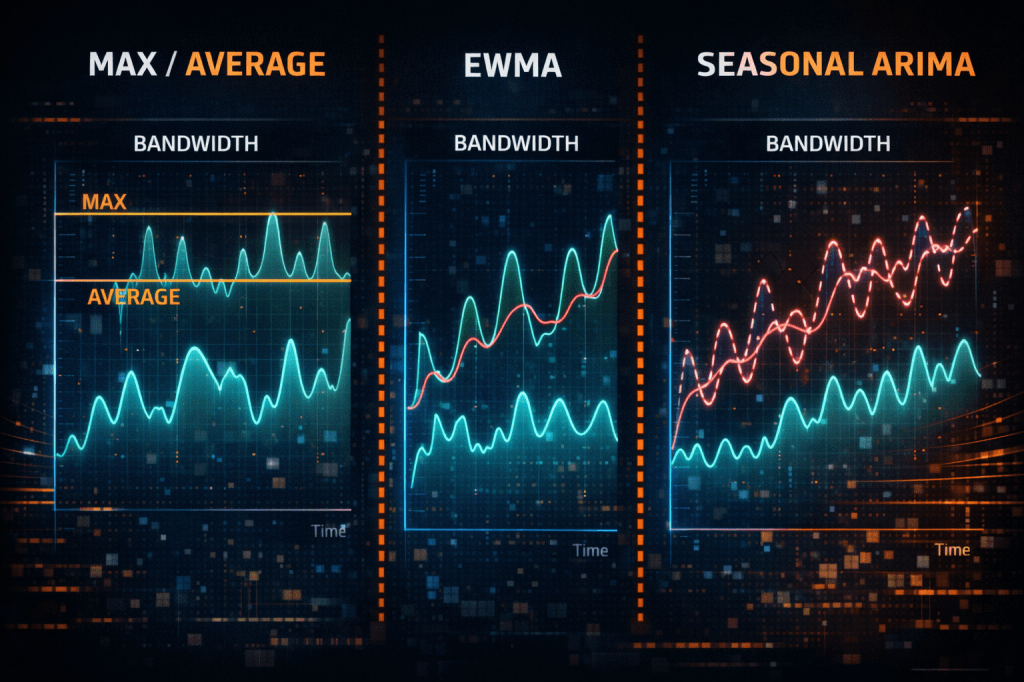

BW estimation techniques range from simple and conservative to mathematically sophisticated. Some systems rely on peak-based approaches, such as using the maximum observed utilization or a high percentile over a defined measurement interval. These models are straightforward and emphasize congestion avoidance. They tend to err on the side of over-allocation, sacrificing absolute efficiency in exchange for confidence and stability. Because they are easy to reason about and computationally light, they are attractive in environments where deterministic behavior is paramount.
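
Both peak-based estimators mentioned above fit in a few lines. This sketch assumes utilization samples collected over one measurement interval. It uses the nearest-rank definition of a percentile, which is only one of several common definitions. The function names are illustrative.

```python
import math


def peak_estimate(samples: list[float]) -> float:
    """Peak-based estimate: the largest utilization observed in the interval."""
    return max(samples)


def percentile_estimate(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples <= it."""
    if not samples or not 0 < pct <= 100:
        raise ValueError("need samples and 0 < pct <= 100")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


if __name__ == "__main__":
    # Twenty hypothetical samples (Gb/s) with a single one-sample spike.
    samples = [1.0] * 19 + [10.0]
    print(peak_estimate(samples))            # 10.0
    print(percentile_estimate(samples, 95))  # 1.0
```

With one outlier among twenty samples, the peak estimate reserves for the spike while the 95th percentile ignores it. That is the tradeoff between the two: the percentile allocates less, at the cost of under-provisioning for rare bursts.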

To reduce sensitivity to transient bursts, many implementations employ smoothing techniques. A simple moving average, or a max-average variant that takes the highest of several such averages, reduces volatility by averaging utilization over a fixed window. However, it can introduce lag unless mechanisms for quickly detecting sudden changes are also implemented. An exponentially weighted moving average (EWMA) places greater emphasis on recent samples, making it more responsive while still filtering noise. These techniques are particularly common in operational networks because they are computationally lightweight, stable, and easily tuned. Importantly, they can be implemented directly on network devices without requiring substantial processing resources. However, they respond to observed traffic changes rather than anticipating them.
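
The smoothing techniques above can be sketched as follows. The `max_average` function encodes one plausible reading of "max average": the highest of several fixed-window averages. That interpretation, the choice to seed the EWMA with the first sample, and all function names are assumptions.

```python
def moving_average(samples: list[float], window: int) -> float:
    """Simple moving average over the most recent `window` samples."""
    if window < 1:
        raise ValueError("window must be >= 1")
    recent = samples[-window:]
    return sum(recent) / len(recent)


def max_average(samples: list[float], window: int) -> float:
    """Highest mean among consecutive, non-overlapping full windows (assumed reading)."""
    if window < 1 or len(samples) < window:
        raise ValueError("need at least one full window")
    means = [sum(samples[i:i + window]) / window
             for i in range(0, len(samples) - window + 1, window)]
    return max(means)


def ewma(samples: list[float], alpha: float) -> float:
    """EWMA seeded with the first sample: s_t = alpha * x_t + (1 - alpha) * s_(t-1)."""
    estimate = samples[0]
    for x in samples[1:]:
        estimate = alpha * x + (1 - alpha) * estimate
    return estimate


if __name__ == "__main__":
    demand = [10.0, 10.0, 10.0, 10.0, 50.0]  # hypothetical jump in the last sample
    print(moving_average(demand, window=4))  # 20.0
    print(ewma(demand, alpha=0.5))           # 30.0
    print(max_average([10.0, 20.0, 30.0, 40.0], window=2))  # 35.0
```

On this series, the four-sample moving average moves only to 20 after the jump, while an EWMA with `alpha = 0.5` moves to 30. A larger `alpha` makes the EWMA track recent samples more closely, at the cost of filtering less noise.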

More advanced systems incorporate predictive modeling. Forecasting methods such as seasonal ARIMA or Holt-Winters analysis attempt to capture diurnal cycles, weekly patterns, and long-term growth trends. Machine learning approaches may go further, incorporating additional variables to refine predictions. When the observed data contains sufficiently strong trends and patterns, these predictive models can improve bandwidth efficiency by anticipating future adjustment needs. The cost of this sophistication lies in the need for historical data storage, model training, and increased computational requirements. As a result, predictive estimation is often performed in centralized analytics systems rather than directly on routers.
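
To make the forecasting idea concrete, the sketch below implements additive Holt-Winters (triple exponential smoothing) from its standard level, trend, and seasonal update equations. It is a sketch, not a tuned forecaster. The initialization from the first two seasons is deliberately simple, and the smoothing constants `alpha`, `beta`, and `gamma` are supplied by hand rather than fitted. In practice, a maintained library such as statsmodels would more likely be used. For 5-minute samples with a daily cycle, `season_len` would be 288.

```python
def holt_winters_forecast(series: list[float], season_len: int, alpha: float,
                          beta: float, gamma: float, horizon: int) -> list[float]:
    """Additive Holt-Winters point forecasts for the next `horizon` samples."""
    m = season_len
    if m < 1 or len(series) < 2 * m:
        raise ValueError("need at least two full seasons of data")
    if not all(0.0 <= p <= 1.0 for p in (alpha, beta, gamma)):
        raise ValueError("smoothing constants must lie in [0, 1]")

    # Simple initialization from the first two seasons.
    level = sum(series[:m]) / m
    trend = (sum(series[m:2 * m]) / m - level) / m
    seasonal = [x - level for x in series[:m]]  # seasonal[i] tracks phase i of the cycle

    # Smooth level, trend, and seasonal components through the remaining data.
    for t in range(m, len(series)):
        s_prev = seasonal[t % m]  # same phase, one season earlier
        prev_level = level
        level = alpha * (series[t] - s_prev) + (1 - alpha) * (level + trend)
        trend = beta * (level - prev_level) + (1 - beta) * trend
        seasonal[t % m] = gamma * (series[t] - level) + (1 - gamma) * s_prev

    # Forecast h steps past the last observation (index n - 1).
    n = len(series)
    return [level + h * trend + seasonal[(n - 1 + h) % m] for h in range(1, horizon + 1)]


if __name__ == "__main__":
    # Hypothetical series: three repetitions of a four-sample cycle, no growth.
    history = [10.0, 20.0, 30.0, 40.0] * 3
    print(holt_winters_forecast(history, season_len=4, alpha=0.3, beta=0.1,
                                gamma=0.2, horizon=4))
```

On a perfectly periodic series like the one above, the forecast should reproduce the cycle to within floating-point rounding.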

A system also need not commit to a single model. Switching between models based on their measured performance, for example between a mathematically complex predictor and a simple max-average estimator, can combine the strengths of both.
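
Switching based on performance metrics can be as simple as back-testing each candidate on recent history and keeping whichever would have performed best. The sketch below assumes a hypothetical asymmetric cost that penalizes under-allocation (which risks congestion) more heavily than over-allocation (which wastes capacity). The weighting, the evaluation window, and all names are illustrative choices, not a prescribed metric.

```python
from typing import Callable, Sequence

Estimator = Callable[[Sequence[float]], float]


def pick_estimator(history: Sequence[float], estimators: dict[str, Estimator],
                   eval_points: int, under_weight: float = 2.0) -> str:
    """Name of the estimator with the lowest weighted one-step-ahead error.

    Under-allocation (actual > predicted) is weighted by `under_weight`;
    over-allocation (predicted > actual) has weight 1.
    """
    if not 1 <= eval_points < len(history):
        raise ValueError("need 1 <= eval_points < len(history)")
    scores = {}
    for name, estimate in estimators.items():
        cost = 0.0
        for t in range(len(history) - eval_points, len(history)):
            predicted = estimate(history[:t])  # only data available before t
            actual = history[t]
            cost += under_weight * max(actual - predicted, 0.0) + max(predicted - actual, 0.0)
        scores[name] = cost / eval_points
    return min(scores, key=scores.get)


if __name__ == "__main__":
    history = [10.0] * 5 + [50.0] * 5  # hypothetical step change in demand
    candidates = {
        "last_value": lambda h: h[-1],
        "long_mean": lambda h: sum(h) / len(h),
    }
    print(pick_estimator(history, candidates, eval_points=4))  # last_value
```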

An important lesson emerges from this stage: the mathematical complexity of the BW estimator/predictor influences where it can be executed. Simple estimators can operate locally and continuously. Predictive models generally require off-box processing. This is not a matter of architectural preference but of practical feasibility. The mathematics of estimation/prediction drives the placement of computation.

Tunnel Optimization: Acting on the Estimate

Once a BW estimate has been calculated, the network must decide whether that estimate should result in a change to the existing tunnel(s). This stage transforms analysis into action. The network evaluates whether tunnels should be resized, rerouted, merged/split, or potentially preempted in order to align allocated capacity with observed demand.

A critical step in this process is determining whether the new BW estimate actually justifies modifying the tunnel, which is done by evaluating it against defined adjustment rules. These rules typically include thresholds that define the minimum change required before a tunnel is modified. For example, a system may require that the estimated bandwidth exceed the current reservation by a specified percentage before triggering a resize. In addition, or as an alternative, the system may enforce an absolute change threshold, such as requiring that the estimated BW differ from the current allocation by a minimum number of megabits per second.
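
The threshold test above can be sketched as a single predicate. This version assumes bandwidth in Mb/s and treats increases and decreases symmetrically, although the example above mentions only increases. A change must satisfy every threshold that is configured: leaving one threshold as `None` gives the "as an alternative" case, and setting both gives the "in addition" case. The threshold values in the usage lines are hypothetical.

```python
from typing import Optional


def should_resize(current_mbps: float, estimated_mbps: float,
                  pct_threshold: Optional[float] = None,
                  abs_threshold_mbps: Optional[float] = None) -> bool:
    """True if the change meets every configured threshold (percentage and/or absolute)."""
    if pct_threshold is None and abs_threshold_mbps is None:
        raise ValueError("configure at least one threshold")
    change = abs(estimated_mbps - current_mbps)
    # Compare change/current >= pct/100 without dividing (safe when current is 0).
    if pct_threshold is not None and change * 100 < pct_threshold * current_mbps:
        return False
    if abs_threshold_mbps is not None and change < abs_threshold_mbps:
        return False
    return change > 0


if __name__ == "__main__":
    print(should_resize(1000, 1080, pct_threshold=10))                          # False: 8% change
    print(should_resize(1000, 1150, pct_threshold=10))                          # True: 15% change
    print(should_resize(1000, 1150, pct_threshold=10, abs_threshold_mbps=200))  # False: only 150 Mb/s
```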

These adjustment thresholds play an essential role in maintaining stability. If the thresholds are too sensitive, tunnels may be resized frequently in response to brief fluctuations. Each resize event can trigger signaling activity, temporary path changes, or make-before-break operations that introduce additional control-plane load and network churn. If the thresholds are too conservative, the system may fail to react quickly enough, increasing the likelihood of congestion.

Designing these thresholds therefore becomes a balance between responsiveness and stability. The goal is to ensure that only meaningful and sustained changes in traffic demand trigger network reoptimization.
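
One common way to ensure that only sustained changes trigger action is to require the threshold test to pass for several consecutive evaluation intervals. The `SustainedChangeFilter` below is a hypothetical sketch of that idea. It does not check that consecutive changes move in the same direction, which a real implementation might want to do.

```python
class SustainedChangeFilter:
    """Signal a resize only after `required` consecutive threshold crossings."""

    def __init__(self, required: int):
        if required < 1:
            raise ValueError("required must be >= 1")
        self.required = required
        self.streak = 0  # consecutive intervals in which the threshold test passed

    def update(self, threshold_exceeded: bool) -> bool:
        """Feed one interval's threshold result; return True when a resize should fire."""
        self.streak = self.streak + 1 if threshold_exceeded else 0
        if self.streak >= self.required:
            self.streak = 0  # the next resize needs a fresh run of crossings
            return True
        return False


if __name__ == "__main__":
    f = SustainedChangeFilter(required=3)
    # A brief blip (two crossings, then a quiet interval) does not fire; three in a row do.
    print([f.update(x) for x in [True, True, False, True, True, True]])
```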

When a BW change does justify action, the network must also determine whether the tunnel should remain on its current path or move to a new one. Path recomputation thus introduces a second decision problem: determining whether a candidate path is actually better than the existing one. In traffic engineering systems, “better” rarely means simply shorter. Instead, the evaluation must consider available bandwidth, policy constraints, and the resulting utilization across the network.
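
The following is a minimal sketch of the "is the candidate actually better" test. It assumes each path is a list of links annotated with capacity and with reservations that exclude the tunnel being evaluated. It compares only feasibility and peak link utilization. Policy constraints such as affinities or shared-risk groups are omitted, and `min_gain` is a hypothetical tuning margin that keeps marginal improvements from causing reroutes.

```python
from dataclasses import dataclass


@dataclass
class Link:
    capacity_mbps: float
    reserved_mbps: float  # assumed to exclude the tunnel being evaluated


def fits(path: list[Link], demand_mbps: float) -> bool:
    """Every link has enough unreserved bandwidth for the demand."""
    return all(link.capacity_mbps - link.reserved_mbps >= demand_mbps for link in path)


def peak_utilization(path: list[Link], demand_mbps: float) -> float:
    """Highest link utilization on the path once the demand is placed on it."""
    return max((link.reserved_mbps + demand_mbps) / link.capacity_mbps for link in path)


def is_better(candidate: list[Link], current: list[Link], demand_mbps: float,
              min_gain: float = 0.05) -> bool:
    """Move only if the candidate fits and lowers peak utilization by at least min_gain."""
    if not fits(candidate, demand_mbps):
        return False
    if not fits(current, demand_mbps):
        return True  # the current path can no longer carry the new reservation
    gain = peak_utilization(current, demand_mbps) - peak_utilization(candidate, demand_mbps)
    return gain >= min_gain


if __name__ == "__main__":
    current = [Link(10_000, 8_000), Link(10_000, 2_000)]                         # 2 hops
    candidate = [Link(10_000, 3_000), Link(10_000, 4_000), Link(10_000, 1_000)]  # 3 hops
    print(is_better(candidate, current, demand_mbps=1_500))  # True
```

In the example, the three-hop candidate wins over the two-hop current path because it lowers peak utilization from 95% to 55%. This is a case where the better path is not the shorter one.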

Once these checks are satisfied, the network can proceed with path optimization. This may involve resizing the existing tunnel, signaling a new reservation, or performing a make-before-break operation to migrate the tunnel to a different path while preserving forwarding continuity. Admission control mechanisms ensure that the updated reservation does not exceed available capacity, and priority policies determine whether lower-priority traffic must be displaced if resources are constrained.
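
Admission control with preemption can be sketched for a single link. The sketch assumes the RSVP-TE convention that priorities range from 0 (highest) to 7 (lowest). It also assumes that a new reservation may preempt only reservations whose holding priority is numerically greater than its setup priority. The victim-selection order (lowest priority first, then largest reservation first) is a hypothetical heuristic, and on a real path the check would be repeated for every link.

```python
from dataclasses import dataclass


@dataclass
class Reservation:
    name: str
    bw_mbps: float
    holding_priority: int  # 0 = highest, 7 = lowest


def admit(capacity_mbps: float, existing: list[Reservation], request_mbps: float,
          setup_priority: int) -> tuple[bool, list[str]]:
    """Return (admitted, names of reservations to preempt) for one link."""
    free = capacity_mbps - sum(r.bw_mbps for r in existing)
    if request_mbps <= free:
        return True, []
    # Only strictly lower-priority reservations (numerically higher holding priority)
    # may be displaced; try the lowest priority, then the largest reservation, first.
    victims = sorted((r for r in existing if r.holding_priority > setup_priority),
                     key=lambda r: (-r.holding_priority, -r.bw_mbps))
    preempted: list[str] = []
    for r in victims:
        preempted.append(r.name)
        free += r.bw_mbps
        if request_mbps <= free:
            return True, preempted
    return False, []  # even full preemption would not make room; displace nothing


if __name__ == "__main__":
    link = [Reservation("A", 4000, 0), Reservation("B", 3000, 5), Reservation("C", 2000, 7)]
    # 1000 Mb/s free on a 10 Gb/s link; a 4000 Mb/s request at setup priority 3
    print(admit(10_000, link, 4000, setup_priority=3))  # (True, ['C', 'B'])
```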

In practice, this stage represents the point where estimation policy meets operational stability. The thresholds that govern bandwidth adjustment and the criteria used to evaluate alternate paths determine how aggressively the network adapts to changing traffic patterns. When tuned correctly, these mechanisms allow the network to respond to real demand shifts while avoiding unnecessary oscillations in the control plane.

Within the broader control loop, tunnel optimization and enforcement serve as the mechanism that translates measurement and estimation into tangible changes in network behavior. The effectiveness of this stage ultimately determines how smoothly the network adapts to evolving traffic conditions.

The Continuous Feedback Cycle

When viewed as a whole, BW management in TE networks forms a continuous feedback cycle. The network measures its current state, estimates appropriate BW allocations, optimizes tunnel placement, enforces the resulting decisions, and then measures again. Each stage influences the next. Measurement quality affects estimation accuracy. Estimation sophistication affects computational placement. Optimization authority affects convergence behavior and stability.
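
The whole cycle can be compressed into a toy loop that measures, estimates with an EWMA, applies a percentage threshold, and then "enforces" the decision by updating the reservation. It is a hypothetical simulation with no signaling or path computation. It is meant only to show how each stage's output feeds the next iteration.

```python
def run_loop(observed_mbps: list[float], initial_bw: float,
             alpha: float = 0.5, pct_threshold: float = 10.0) -> list[float]:
    """Toy control loop: return the reservation in effect after each measurement."""
    reserved = initial_bw
    estimate = None
    timeline = []
    for rate in observed_mbps:                       # 1. measure
        # 2. estimate: EWMA seeded with the first measurement
        estimate = rate if estimate is None else alpha * rate + (1 - alpha) * estimate
        # 3. decide: act only if the gap is at least pct_threshold % of the reservation
        if abs(estimate - reserved) * 100 >= pct_threshold * reserved:
            reserved = estimate                      # 4. enforce (stand-in for resize signaling)
        timeline.append(reserved)
    return timeline


if __name__ == "__main__":
    print(run_loop([100, 100, 200, 200, 200], initial_bw=100))  # [100, 100, 150.0, 175.0, 175.0]
```

When demand steps from 100 to 200 Mb/s, the reservation rises to 150 and then 175. The final adjustment is suppressed because the remaining gap (12.5 Mb/s) is below 10% of the current reservation, illustrating how the threshold trades a small residual gap for stability.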

This systems-oriented perspective helps clarify discussions that might otherwise become protocol-centric. Bandwidth management is not defined by a specific signaling mechanism or controller model. It is defined by how effectively the observation, estimation, and enforcement loop operates over time. A well-designed TE system does more than allocate bandwidth; it continuously observes, adapts, and refines its decisions in response to changing network conditions.
